Seoul National University Hospital develops two AI models specialized in medical image interpretation and clinical reasoning and releases them globally… achieving top-tier performance

- X-ray interpretation AI that compares past and current images to detect lesion changes, supporting patient explanation and emergency decision-making

- Medical reasoning AI that integrates the medical history and records of patients with complex symptoms to present the rationale for differential diagnoses and additional tests, for use as a clinical aid

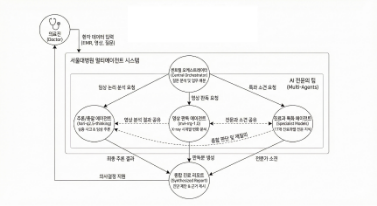

[Figure] Multi-agent medical AI architecture of Seoul National University Hospital, where image interpretation AI and clinical reasoning AI analyze patient images and records, respectively, and integrate the results to assist clinical decision-making

Seoul National University Hospital (President Young-Tae Kim) announced on the 9th that it has released two medical-specialized artificial intelligence (AI) models developed by its Healthcare AI Research Institute as open source to the global community.

The models released are ‘mvl-rrg-1.0,’ an image interpretation AI that analyzes chest X-ray images and generates radiology reports, and ‘hari-q2.5-thinking,’ a large language model specialized in medical reasoning.

Both models are medical-specialized AI systems designed to assist the decision-making processes performed by clinicians in real-world clinical settings, and are applied to medical image interpretation and text-based clinical reasoning, respectively.

This achievement was made possible through support from the Ministry of Science and ICT’s “AI Computing Support Project for Research.” Seoul National University Hospital was selected for this program in recognition of its capability to independently develop large-scale AI models in the medical field and to publicly release research outcomes at a global level.

Through this project, the hospital was provided with 64 H200 GPUs (approximately 4 PFLOPS of computing performance), enabling the establishment of a high-performance training environment for advanced AI models based on large-scale medical data. This allowed efficient training and validation of large-scale medical AI models integrating text and medical imaging.
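As a rough sanity check on these figures, the quoted aggregate can be divided evenly across the GPUs. This is only back-of-the-envelope arithmetic on the two numbers given above; the release does not state which numeric precision the 4 PFLOPS figure refers to, and the total is itself approximate.

```python
# Back-of-the-envelope check using only the two figures quoted in the text.
total_pflops = 4.0   # "approximately 4 PFLOPS" (precision not stated in the release)
num_gpus = 64        # number of H200 GPUs provided

# 1 PFLOPS = 1000 TFLOPS
per_gpu_tflops = total_pflops * 1000 / num_gpus

print(f"Implied throughput per GPU: about {per_gpu_tflops} TFLOPS")
```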

The image interpretation model, ‘mvl-rrg (radiology report generation)-1.0,’ is an AI system that automatically generates radiology reports by analyzing chest X-ray images. Beyond single-image analysis, the model is designed to connect past and current patient images to reflect “temporal changes,” such as disease progression or improvement. It was trained on more than 360,000 publicly available medical imaging data samples to infer patterns of lesion changes.

As a result, even when given only the current image as input, the model scored 34.1 on ROUGE-L and 18.6 on BLEU-4, both standard natural language generation metrics, placing it in the top tier globally in automated chest X-ray report generation.
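For readers unfamiliar with ROUGE-L, the metric rewards the longest common subsequence (LCS) of words shared between a generated report and a reference report. The following is a minimal sketch of the sentence-level F1 form, assuming lowercase whitespace tokenization; the function names and example sentences are illustrative, and the release does not say which ROUGE-L variant, tokenizer, or evaluation set the hospital used. Published scores such as 34.1 are typically this kind of value scaled to 0-100 and averaged over a test set.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            if x == y:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(reference: str, candidate: str) -> float:
    """Sentence-level ROUGE-L F1 on lowercase, whitespace-split tokens."""
    ref = reference.lower().split()
    cand = candidate.lower().split()
    if not ref or not cand:
        return 0.0
    lcs = lcs_length(ref, cand)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)  # share of candidate tokens in the LCS
    recall = lcs / len(ref)      # share of reference tokens in the LCS
    return 2 * precision * recall / (precision + recall)


# Made-up example sentences, not taken from any real report.
score = rouge_l_f1("no acute cardiopulmonary abnormality", "no acute abnormality")
print(round(score * 100, 1))
```

Here the candidate shares a three-word subsequence with the four-word reference, so precision is 3/3, recall is 3/4, and F1 is 6/7.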

This model can be utilized to reduce the burden of image comparison and interpretation in outpatient clinics and emergency departments. In clinical settings, it automatically compares past and current images to quantitatively present changes in lesions, thereby helping clinicians explain treatment progress to patients. In time-critical environments such as emergency rooms, it can rapidly identify findings that require immediate intervention, such as pneumothorax, immediately after X-ray acquisition, assisting clinicians in initial decision-making.

The text-based medical AI, ‘hari-q2.5-thinking,’ is designed to understand clinical scenarios and perform reasoning necessary for diagnosis and treatment processes. The model demonstrated its medical reasoning capability by achieving an 89% accuracy rate on a mock test of the Korean Medical Licensing Examination (KMLE).

‘hari-q2.5-thinking’ can assist clinicians in cases where multiple symptoms are present and the underlying cause is difficult to determine. For example, in a patient presenting with cough, abdominal pain, and headache simultaneously, the model goes beyond simple symptom classification and considers the patient’s past medical history and clinical records to systematically suggest possible differential diagnoses and the need for additional tests. It can also be used as an educational tool for medical students and residents by explaining the logic behind clinical decision-making.

Based on these models, Seoul National University Hospital is working to expand into department-specific models across 17 clinical departments, including internal medicine, surgery, and pediatrics. A multi-agent system is currently being built in which multiple AI models divide roles to assist in decision-making. Once clinical validation is completed, the hospital plans to sequentially release department-specific models to expand the research infrastructure for medical AI accessible to clinicians and researchers worldwide.
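The role-splitting idea can be pictured with a small sketch. Everything below is hypothetical: the class names, function names, and placeholder strings are invented for illustration, the two agents are stubs that call no real model, and nothing here reflects the hospital's actual implementation. It only shows the general shape of two specialized agents whose outputs are combined for a clinician to review.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PatientCase:
    current_image: str           # hypothetical ID of the current chest X-ray
    prior_image: Optional[str]   # hypothetical ID of an earlier X-ray, if any
    clinical_notes: str          # free-text history and records


@dataclass
class AgentResult:
    agent: str
    summary: str


def imaging_agent(case: PatientCase) -> AgentResult:
    # Stub: a real system would run an image interpretation model here.
    mode = "with prior comparison" if case.prior_image else "current image only"
    return AgentResult("imaging", f"[draft radiology report, {mode}]")


def reasoning_agent(case: PatientCase) -> AgentResult:
    # Stub: a real system would run a clinical reasoning language model here.
    n_words = len(case.clinical_notes.split())
    return AgentResult("reasoning", f"[differential diagnoses from {n_words} words of notes]")


def integrate(results: List[AgentResult]) -> str:
    # Combine agent outputs into one summary; the decision stays with the clinician.
    lines = [f"{r.agent}: {r.summary}" for r in results]
    lines.append("final decision: clinician")
    return "\n".join(lines)


case = PatientCase("xray-today", "xray-last-month", "cough abdominal pain headache")
print(integrate([imaging_agent(case), reasoning_agent(case)]))
```

Keeping each agent behind the same small result type means a department-specific agent could be added to the list without changing the integration step.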

Dr. Hyeong-Cheol Lee (Vice Director of the Healthcare AI Research Institute) stated, “With a high-performance GPU infrastructure, it has become possible to conduct medical AI research that jointly learns and reasons over text and medical imaging. In particular, the ability to compare past and current images to identify changes in a patient’s condition is a critical factor in real clinical decision-making, and we expect the models released in this study to be effectively utilized in supporting clinicians’ decision-making processes.”

Meanwhile, Seoul National University Hospital has released the developed medical AI models on the Korea Health Data Platform (KHDP), a national strategic technology data platform, as well as on the global AI platform Hugging Face.

■ Open-source Model Links

- Multi-modal model (mvl-rrg-1.0):

https://khdp.net/database/data-search-detail/736/mvl-rrg-1.0/1.0.0

https://huggingface.co/snuh/mvl-rrg-1.0

- Text model (hari-q2.5-thinking):

https://khdp.net/database/data-search-detail/735/hari-q2.5-thinking/1.0.0

https://huggingface.co/snuh/hari-q2.5-thinking

[Photo] Exterior view of Seoul National University Hospital